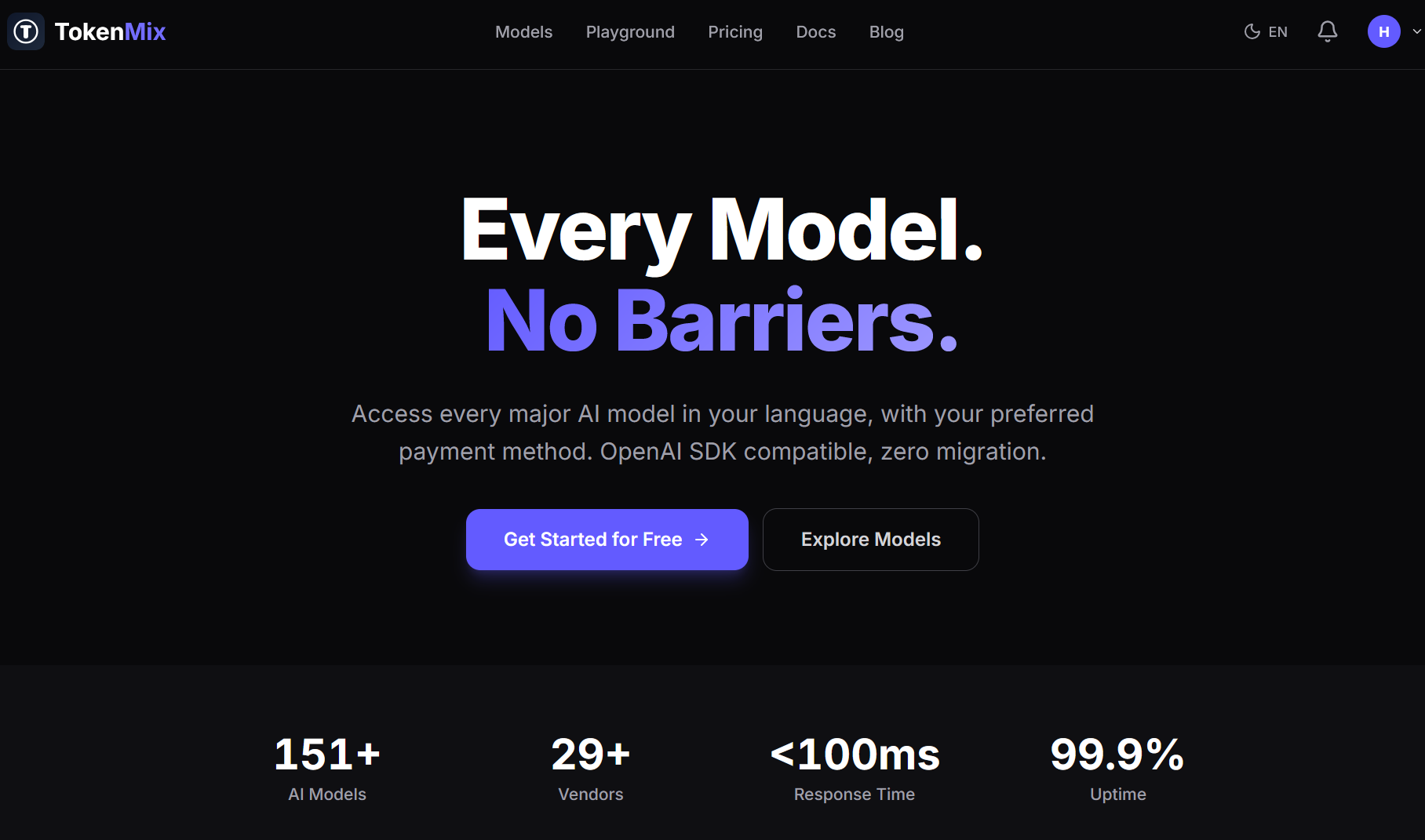

TokenMix

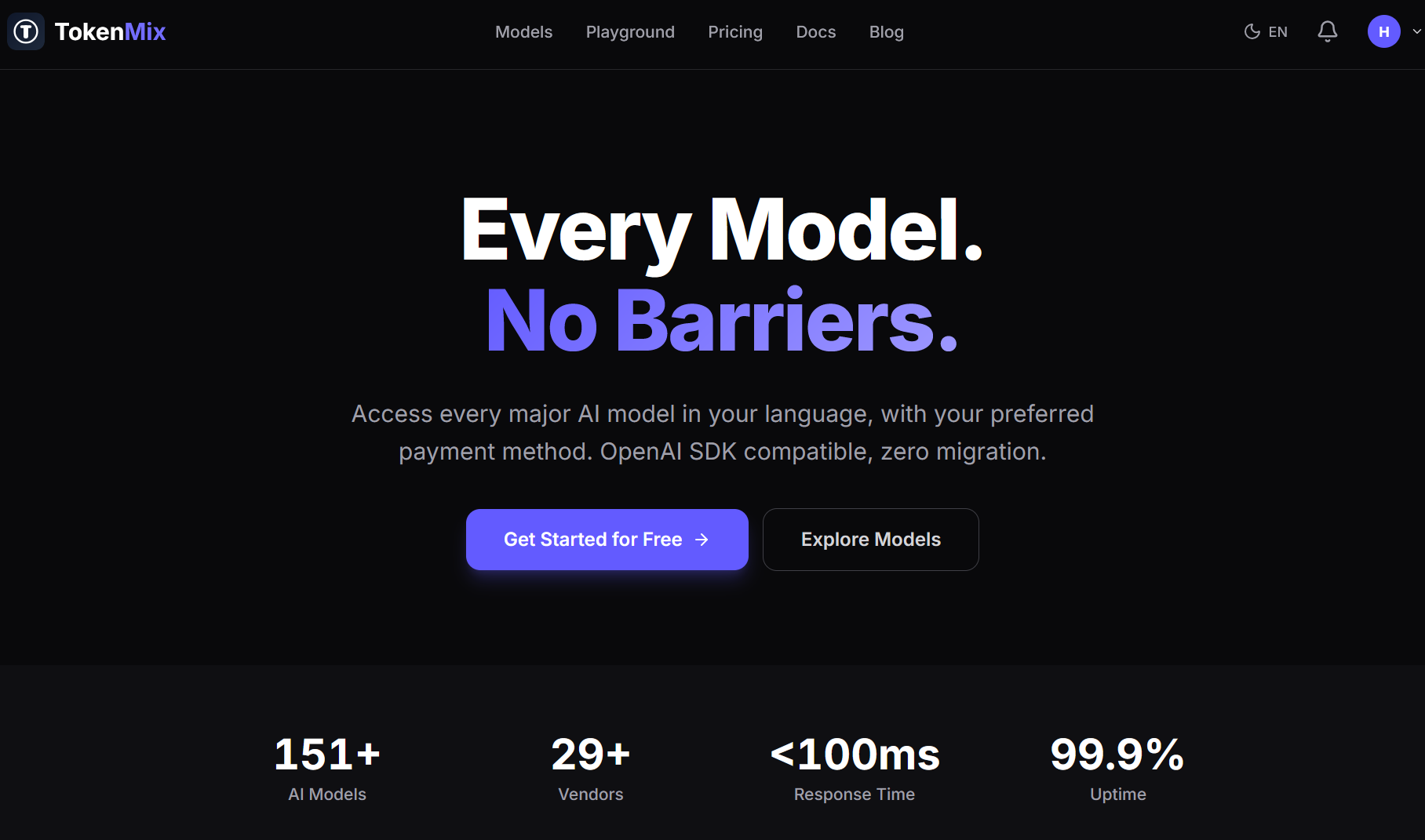

Unified API for 150+ AI Models — One Endpoint, All Providers

TokenMix is a unified API gateway that gives developers access to over 150 AI models through a single OpenAI-compatible endpoint. It connects to providers including OpenAI, Anthropic, Google, Meta, Mistral, and DeepSeek, covering text generation, image creation, video, audio, and embeddings.

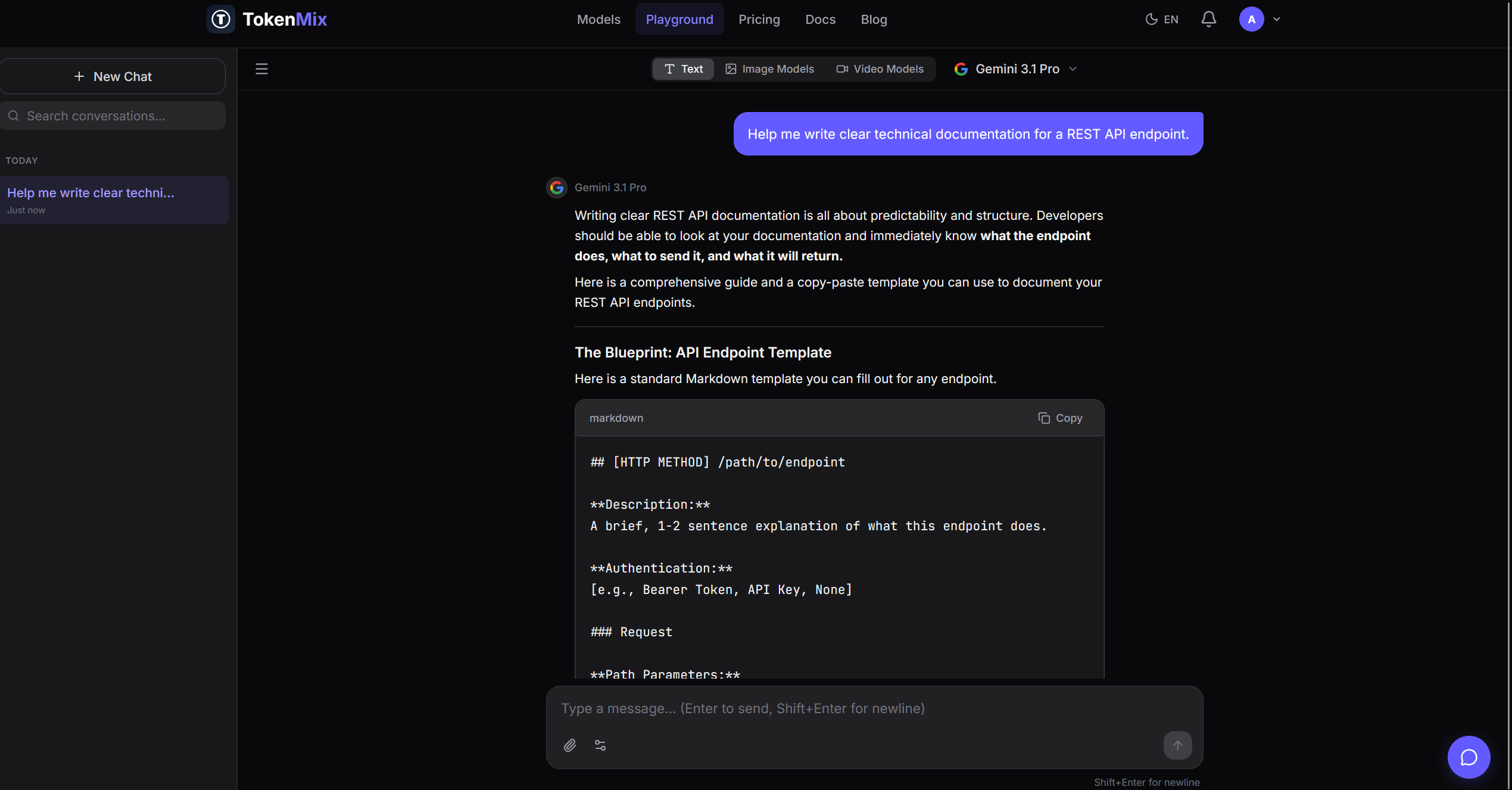

The core idea is simple: instead of juggling multiple API keys, SDKs, and billing accounts, developers point their existing OpenAI-based code to TokenMix and switch models by changing one parameter. No SDK migration, no new authentication flow, no separate billing setup per provider.
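As a rough illustration of the "change one parameter" idea, the sketch below builds two OpenAI-style chat completion requests that differ only in the `model` field. The base URL, API key, and model identifiers are hypothetical placeholders, since this listing does not state the real ones, and the request is only constructed, not sent.

```python
import json
import urllib.request

# Hypothetical values: the real base URL and model identifiers
# are not given in this listing.
BASE_URL = "https://api.tokenmix.example/v1"
API_KEY = "YOUR_TOKENMIX_KEY"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request without sending it."""
    payload = {
        "model": model,  # the only field that changes between models
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Same endpoint, same key, different model name (placeholder names).
req_a = build_chat_request("provider-a/model-x", "Summarize this changelog.")
req_b = build_chat_request("provider-b/model-y", "Summarize this changelog.")
# To send: urllib.request.urlopen(req_a), which needs a real base URL and key.
```

Code that already uses the OpenAI SDK would presumably make the same switch by overriding the client's base URL and API key rather than building requests by hand.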

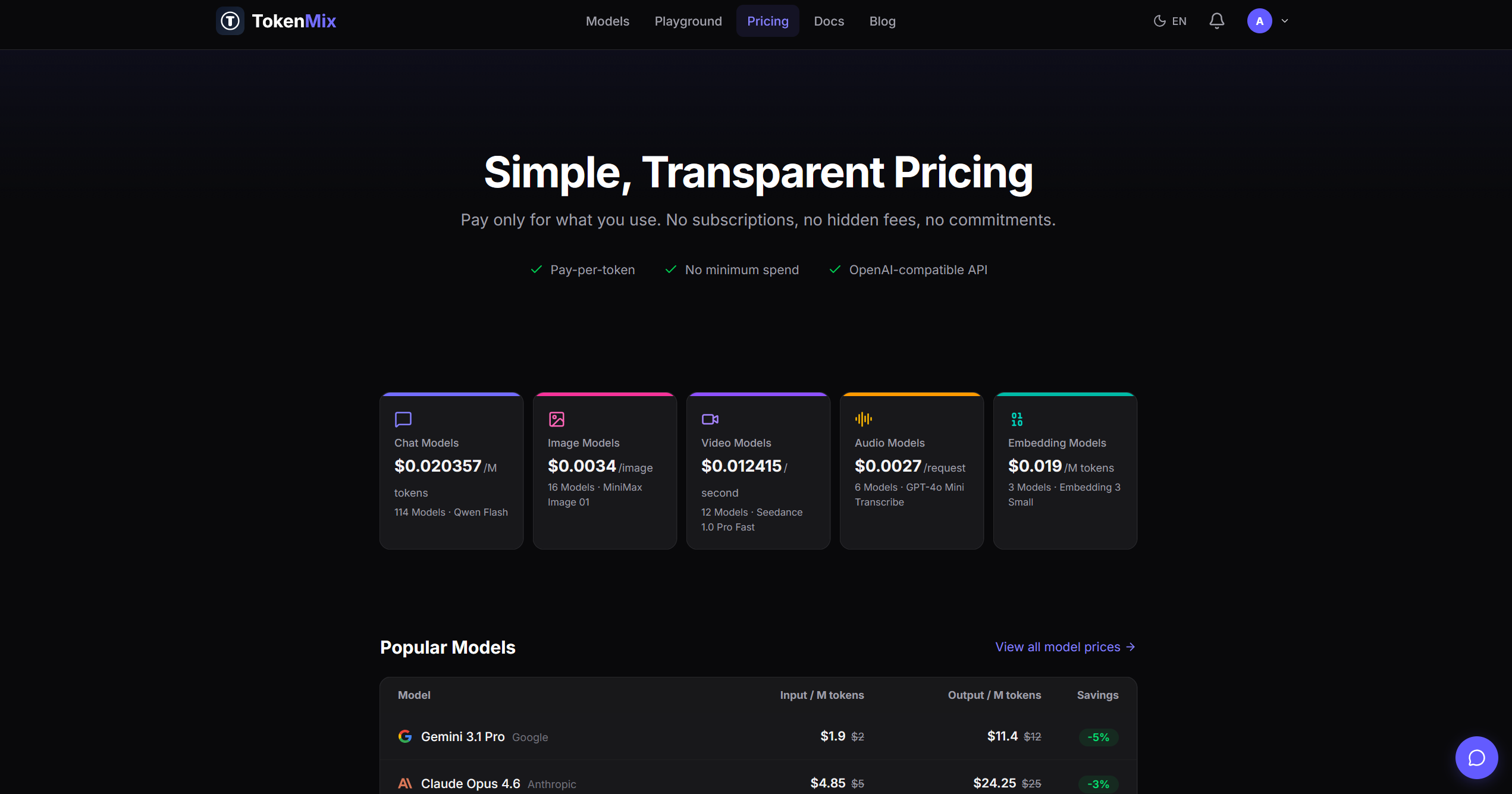

Pricing is strictly pay-as-you-go. There are no monthly subscriptions or minimum commitments. Each model has its own per-token rate, and the platform includes a pricing comparison page where developers can evaluate cost differences across providers before making API calls.
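To make per-token pricing concrete, here is a minimal sketch of estimating what one request would cost on different models. The model names and rates are invented for illustration and are not TokenMix prices; the split into input and output rates is also an assumption about how the rates are quoted.

```python
from decimal import Decimal

# Hypothetical rates in USD per 1,000,000 tokens. Not real TokenMix prices.
RATES = {
    "model-small": {"input": Decimal("0.20"), "output": Decimal("0.80")},
    "model-large": {"input": Decimal("3.00"), "output": Decimal("15.00")},
}
TOKENS_PER_UNIT = Decimal(1_000_000)


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Return the estimated USD cost of one request under the rates above."""
    rate = RATES[model]
    total = rate["input"] * input_tokens + rate["output"] * output_tokens
    return total / TOKENS_PER_UNIT


# Compare the same workload (2,000 input tokens, 500 output tokens).
for name in RATES:
    print(name, estimate_cost(name, 2000, 500))
```

`Decimal` is used instead of `float` so that small per-request amounts add up without rounding drift.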

The platform also provides a usage dashboard for monitoring spend and request volume across all models in one view. For production workloads, the routing layer supports failover — if a provider goes down, traffic can be redirected to an alternative model automatically.
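The failover behavior described above happens inside the routing layer, and its implementation is not documented here. The sketch below only illustrates the general pattern on the client side: try an ordered list of models and fall back to the next one when a call fails. `ProviderUnavailable`, `complete_with_failover`, `fake_send`, and the model names are all hypothetical.

```python
from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Raised by the caller-supplied send function when a model is unreachable."""


def complete_with_failover(
    send: Callable[[str, str], str],
    models: Sequence[str],
    prompt: str,
) -> tuple[str, str]:
    """Try each model in order and return (model_used, response_text)."""
    last_error = None
    for model in models:
        try:
            return model, send(model, prompt)
        except ProviderUnavailable as err:
            last_error = err  # remember the failure and try the next model
    raise RuntimeError("all models failed") from last_error


def fake_send(model: str, prompt: str) -> str:
    """Stand-in for a real API call; simulates an outage on the primary model."""
    if model == "primary-model":
        raise ProviderUnavailable("simulated outage")
    return f"[{model}] reply to: {prompt}"


used, text = complete_with_failover(
    fake_send, ["primary-model", "backup-model"], "ping"
)
print(used, text)
```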

TokenMix is primarily built for developers and startups that need to benchmark, compare, or switch between AI models without the overhead of integrating each provider individually.

Features

Unified API access to 150+ AI models from providers including OpenAI, Anthropic, Google, Meta, Mistral, and DeepSeek

Full OpenAI SDK compatibility — switch models by changing one parameter

Pay-per-token pricing, no subscriptions

Real-time pricing comparison across providers

Automatic failover and load balancing

Multi-modal: text, image, video, audio, embeddings

Unified billing and usage dashboard

Use Cases

Compare LLM performance and cost across providers without separate integrations

Swap models in production with a one-line code change

Build provider-redundant applications with automatic failover

Optimize API spend by routing tasks to the cheapest qualified model
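The last use case, routing to the cheapest qualified model, could be sketched as follows. The catalog entries and prices are invented, and "qualified" is defined here as a simple set of capability tags, which is an assumption made for this example rather than something the listing specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelInfo:
    name: str
    usd_per_million_tokens: float  # hypothetical blended rate
    capabilities: frozenset


# Hypothetical catalog. Names, prices, and tags are placeholders.
CATALOG = [
    ModelInfo("model-small", 0.5, frozenset({"text"})),
    ModelInfo("model-vision", 2.0, frozenset({"text", "image"})),
    ModelInfo("model-large", 9.0, frozenset({"text", "image", "audio"})),
]


def cheapest_qualified(catalog: list, required: set) -> ModelInfo:
    """Return the lowest-priced model whose capabilities cover `required`."""
    qualified = [m for m in catalog if required <= m.capabilities]
    if not qualified:
        raise ValueError(f"no model supports {sorted(required)}")
    return min(qualified, key=lambda m: m.usd_per_million_tokens)


print(cheapest_qualified(CATALOG, {"text"}).name)
print(cheapest_qualified(CATALOG, {"text", "image"}).name)
```

In practice the qualification check would likely also include quality thresholds from the benchmarking step, not just capability tags.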

Comments

Built TokenMix because I got tired of managing five different API keys, five billing dashboards, and rewriting integration code every time a new model dropped. When DeepSeek R1 came out, I spent a whole day just setting up their SDK and auth flow — only to find out the model wasn't great for my use case. That was the breaking point. The idea was simple: one endpoint, one API key, one bill. Point your existing OpenAI code at TokenMix, change the model name, done. No new SDK, no new billing account, no migration project. Right now it supports 150+ models. Every time a new model launches, it's available through the same endpoint developers already use. No more integration tax for trying something new.

