RaptorCI

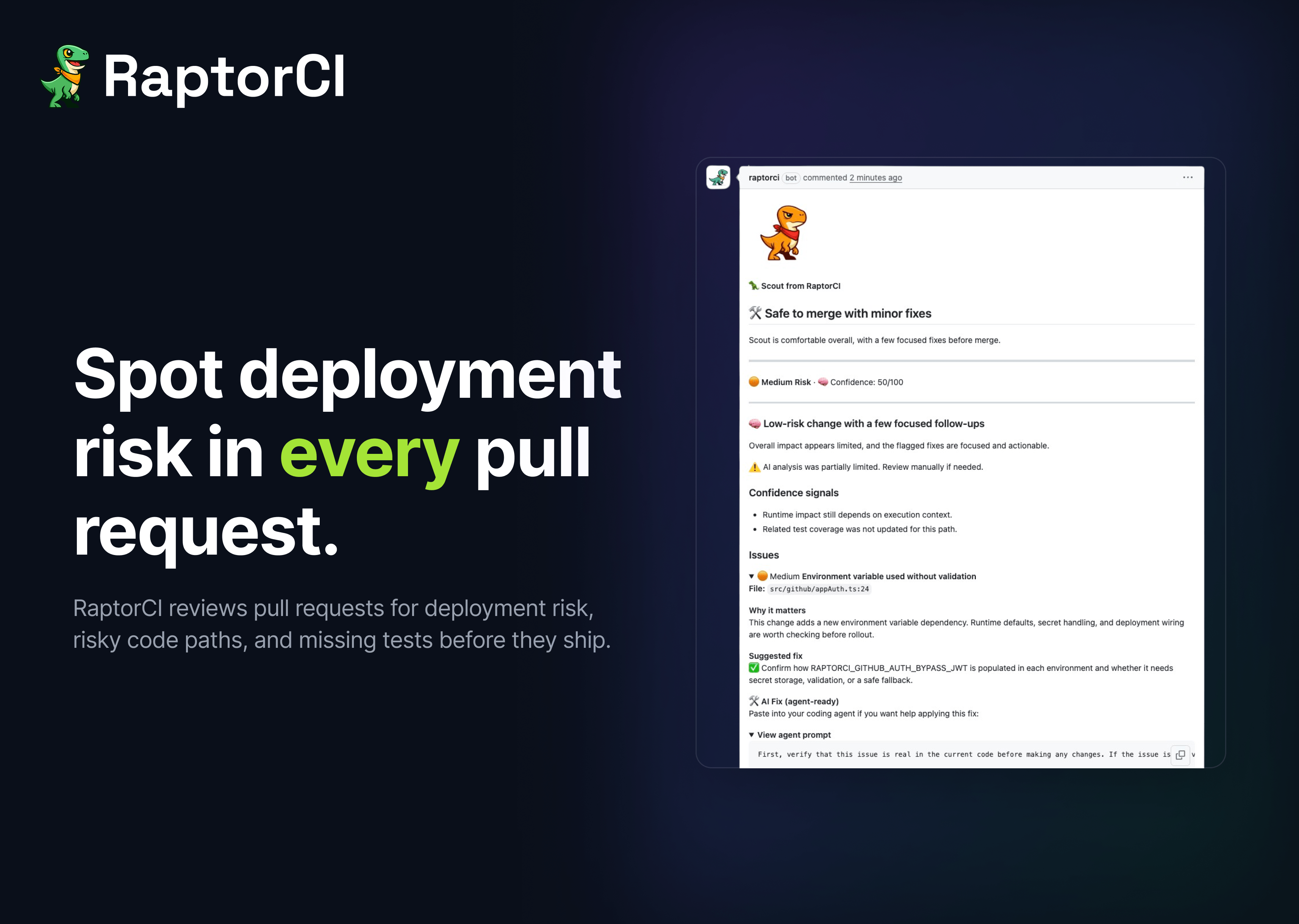

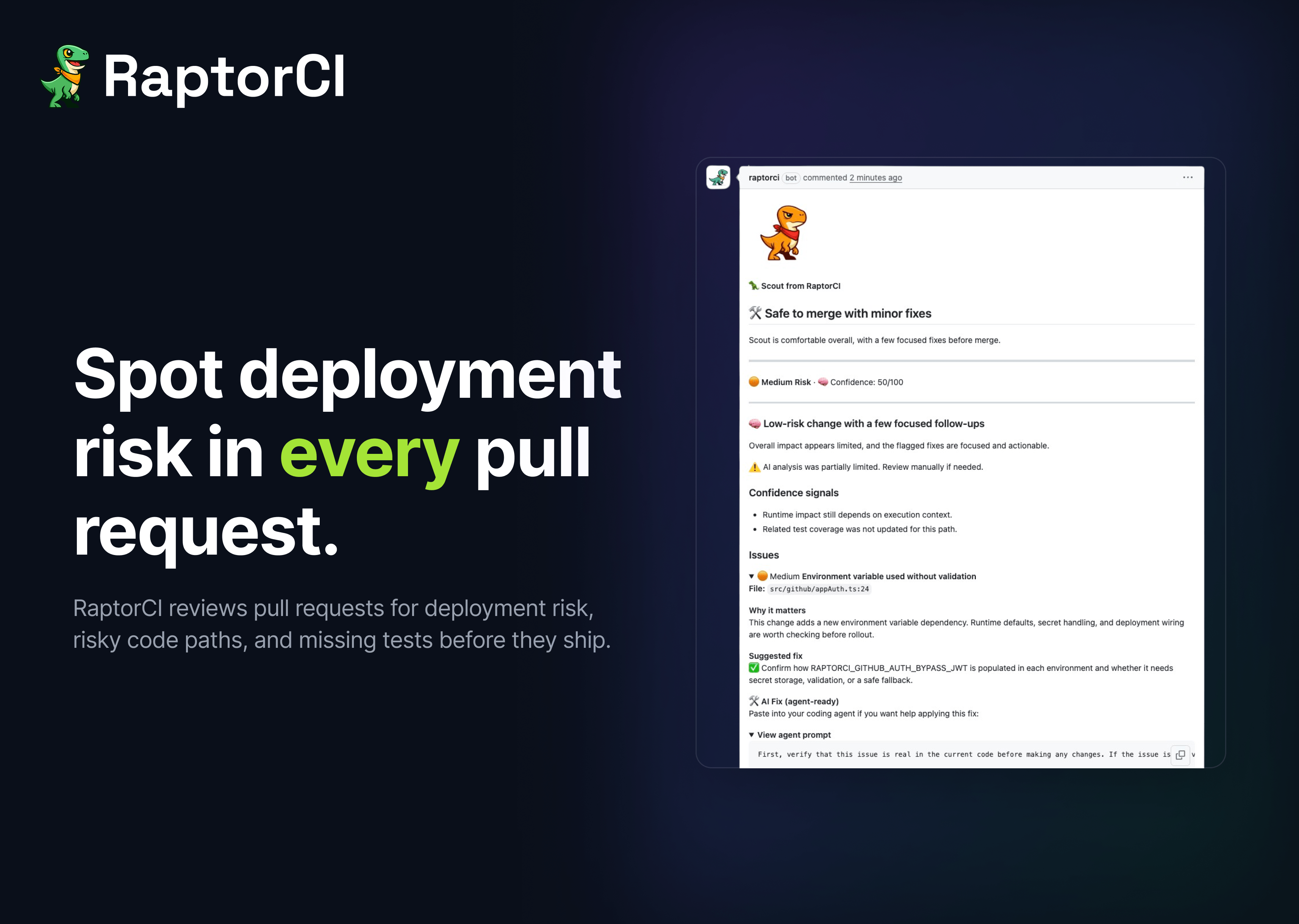

Spot deployment risk in every pull request.

RaptorCI is a GitHub app that surfaces real deployment risk in pull requests before you merge.

Most code review tools focus on style. RaptorCI focuses on impact.

It analyses every PR and highlights what actually matters:

- ⚠️ Risky changes (auth, permissions, config, env vars)

- 🧪 Missing or insufficient test coverage

- 🌍 Runtime and production-impacting changes

- 📊 A clear Deployment Confidence Score

Instead of scanning diffs, you get a clear answer:

Is this safe to ship?

Already used by teams reviewing hundreds of PRs, with strong early feedback on signal quality.

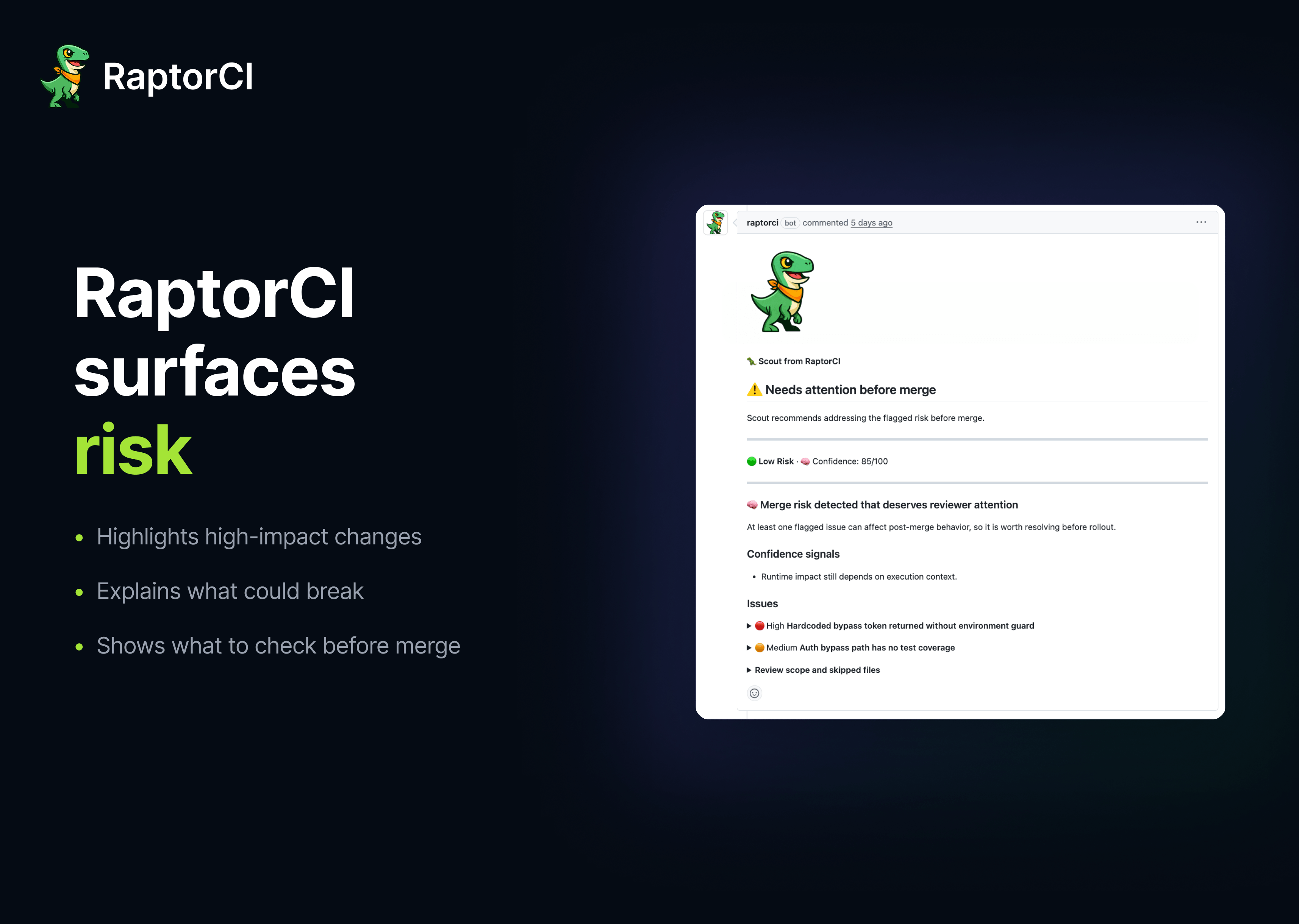

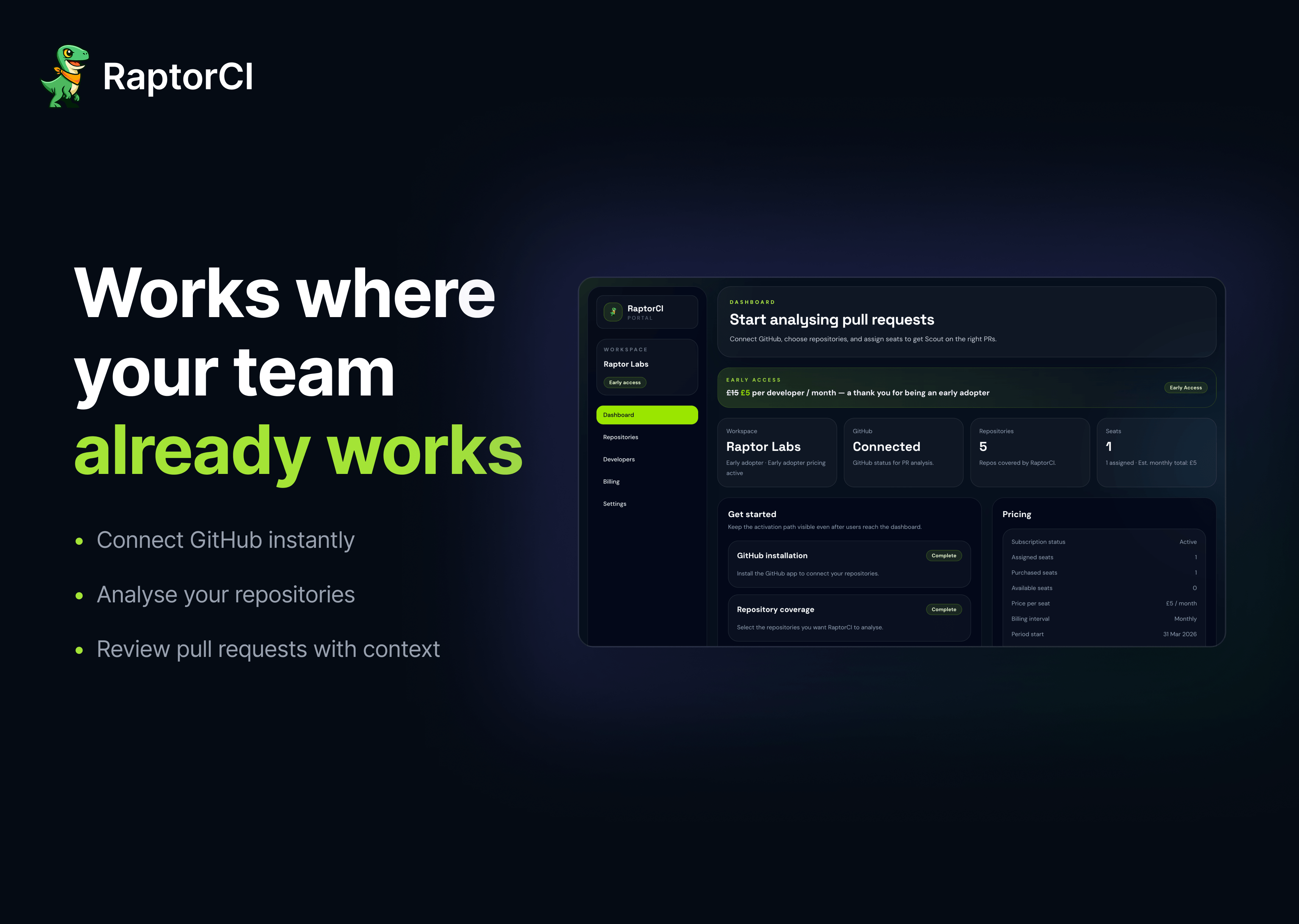

Features

- 🧠 Deployment Confidence Score for every PR

- ⚠️ Detects real risk (auth, permissions, config, env changes)

- 🧪 Flags missing tests on critical code paths

- 🌍 Highlights production-impacting changes

- 🔍 AI finds what humans miss

- 💬 Inline comments with clear fixes

- 📊 Clean summaries, zero noise

- ⚡ Native GitHub integration

- 🔁 Incremental analysis for fast feedback

- 🔒 Built with safety and control in mind

Use Cases

- 🚀 Know if a PR is safe to ship before you merge

- 🔐 Catch security and config risks early

- 🧪 Make sure important changes are properly tested

- 🌍 Avoid production issues from risky changes

- ⚡ Review faster by focusing on what actually matters

- 🤝 Support teams without dedicated reviewers

- 📈 Handle higher PR volume from AI-generated code

- 🧠 Keep review quality consistent across the team

- 🔍 Understand large PRs without digging through everything

- 🛠️ Help new engineers make better changes, faster

Comments

I built RaptorCI after seeing the same problem over and over again in production systems. Code review tools are great at catching style issues, but they don’t really answer the question that matters most: “Is this safe to ship?” At the same time, code is being written faster than ever (a lot of it AI-assisted), and the amount of scrutiny per change is going down. Important risks get buried in diffs, and teams are left guessing.

RaptorCI is my attempt to fix that. It focuses on surfacing real deployment risk in pull requests (things like auth changes, config drift, missing tests, and runtime impact) while keeping the output clear and actually useful. I’ve been working closely with early teams, and we’ve already processed hundreds of PRs, which has helped shape the direction a lot.

It’s still very early, and I’m actively looking for feedback, especially from teams shipping quickly or dealing with large PR volumes. If you try it, I’d genuinely love to hear what feels useful and what doesn’t.
