Musiv - AI Music Video Generator

AI Music Video, AIMV, Music Visualizer, AI MV Generator, Mus

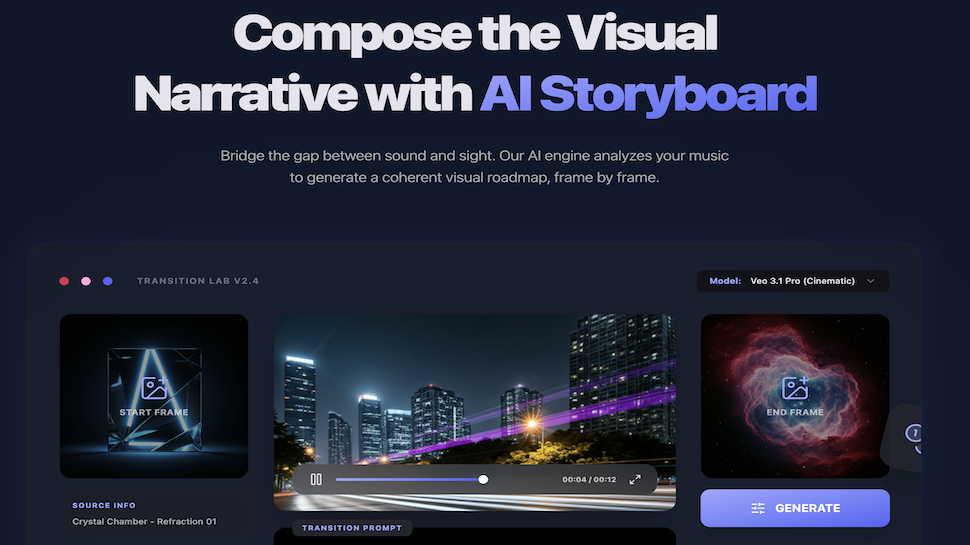

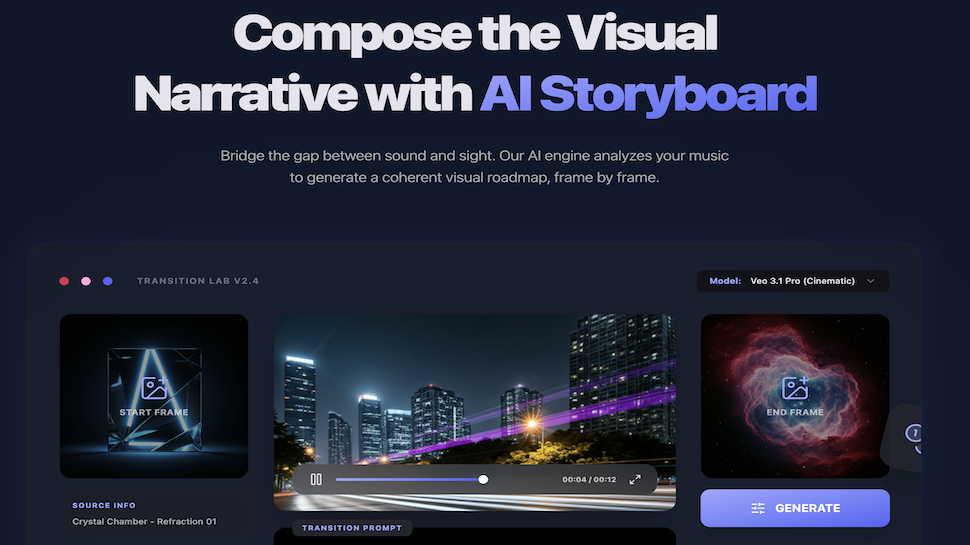

Musiv is an AI platform built for music visualization and fast music video production. Upload an audio file (such as MP3), add a simple prompt, and Musiv analyzes rhythm, energy, and emotional changes in your track. The system then generates matching storyboards and renders synchronized video segments with high visual quality. It is ideal for independent artists and content creators who need to turn audio into compelling videos quickly, without advanced editing skills.

Features

AI audio analysis for rhythm, energy, and mood

Automated storyboard generation from music

Custom visual styles and character options

Fast rendering for synchronized music video segments

Beginner-friendly workflow with no pro editing required

Use Cases

Musicians use Musiv for promo MVs and social teasers without a full crew. Short-form and live creators use it for backgrounds and clips on TikTok, Shorts, Reels, and streams. Channels and brands use it for instrumental visuals, event openers, bumpers, and rough cuts. Educators and portfolio builders use it to produce demos and reels from a single audio upload.

Comments

We built Musiv because a lot of indie musicians and creators ship great audio but not a full MV, since budget and time get in the way. The idea was simple: have AI “read” the track like a director (tempo, energy, mood), then turn that into a storyboard and visuals without asking anyone to master After Effects first. Under the hood we pair audio analysis with LLM storyboarding and video models, then assemble segments so the output feels locked to the music. We’d love honest feedback: what would make this a must-have in your workflow? Latency, character control, something else? Comment below; we read everything.
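To make "locked to the music" concrete, here is a minimal sketch of one way segment boundaries could be derived from energy and aligned to beats. It is not Musiv's actual pipeline, which is not described here. The function names (`rms_energy`, `snap_to_beat`, `plan_segments`), the fixed-tempo beat grid, and the single energy threshold are all assumptions made for illustration.

```python
import math

def rms_energy(samples, window):
    """Return the RMS energy of each non-overlapping window of samples."""
    return [
        math.sqrt(sum(x * x for x in samples[i:i + window]) / window)
        for i in range(0, len(samples) - window + 1, window)
    ]

def snap_to_beat(t, bpm):
    """Snap a time in seconds to the nearest beat of a fixed-tempo grid (assumed)."""
    beat = 60.0 / bpm
    return round(t / beat) * beat

def plan_segments(energies, window_seconds, bpm, threshold):
    """Label each window 'low' or 'high', merge equal runs, snap cuts to beats.

    Returns a list of (start_seconds, end_seconds, label) tuples.
    """
    labels = ["high" if e >= threshold else "low" for e in energies]
    segments = []
    start = 0
    for i in range(1, len(labels) + 1):
        # Close the current run at the end of the list or when the label changes.
        if i == len(labels) or labels[i] != labels[start]:
            begin = snap_to_beat(start * window_seconds, bpm)
            end = snap_to_beat(i * window_seconds, bpm)
            segments.append((begin, end, labels[start]))
            start = i
    return segments

# Hypothetical per-second energies for a five-second clip at 120 BPM.
plan = plan_segments([0.1, 0.1, 0.8, 0.9, 0.2], window_seconds=1.0, bpm=120, threshold=0.5)
```

Each tuple in `plan` could then serve as one storyboard slot, with the label hinting at calmer or more intense visuals. A real system would likely use a beat tracker rather than a fixed tempo and a richer mood signal than one threshold.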


New to Fazier?

Find your next favorite product or submit your own. Made by @FalakDigital.

Copyright ©2025. All Rights Reserved