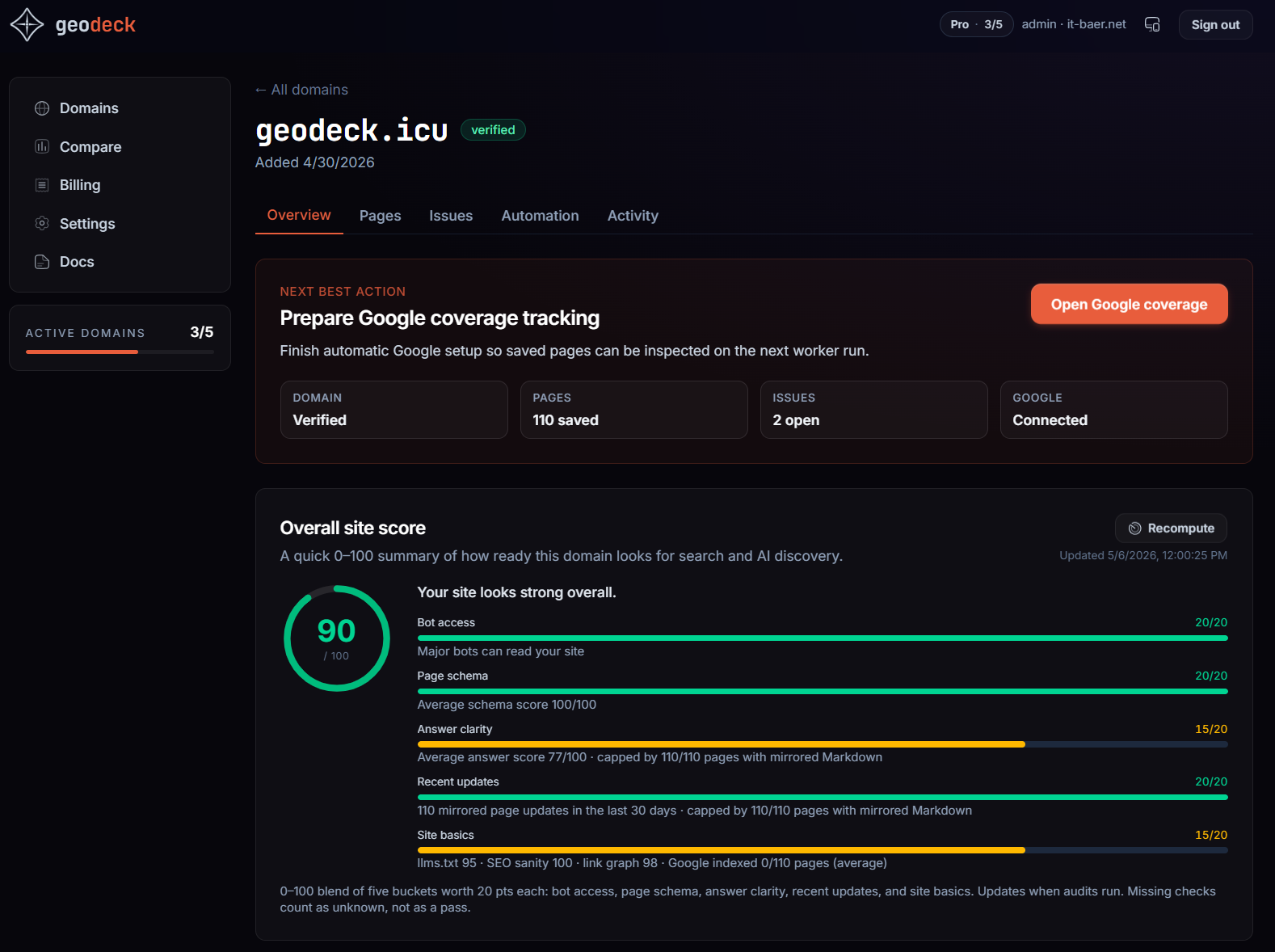

geodeck - SEO/GEO Optimization Engine

Keeping you visible to AI and search

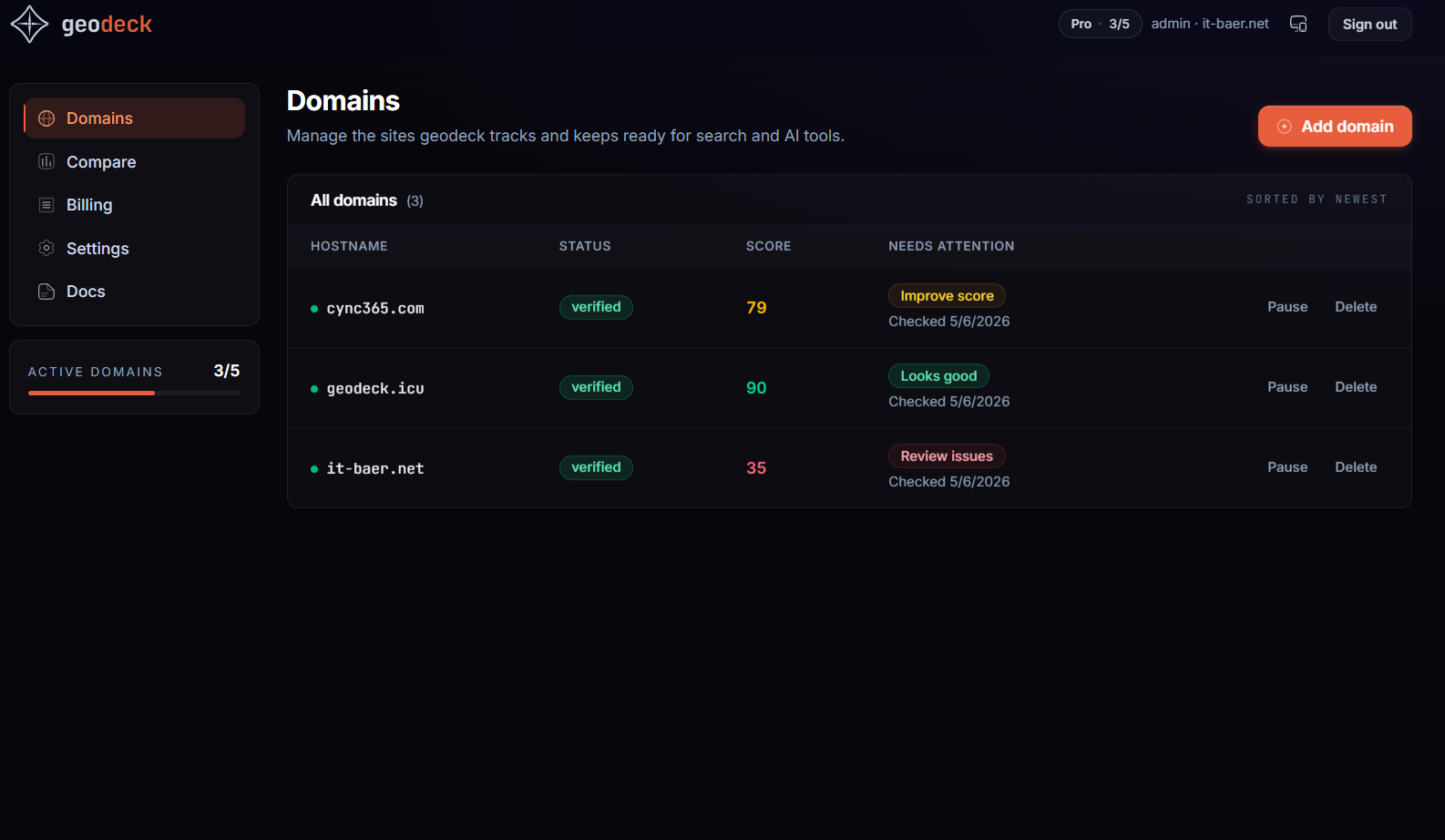

geodeck keeps websites ready for AI crawlers and search engines every time content changes. Connect one signed webhook and it automatically refreshes llms.txt, llms-full.txt, Markdown mirrors, sitemap.xml, RSS feeds, and search-notification pings. It works with WordPress, Ghost, Webflow, Sanity, Strapi, Hashnode, Next.js, and custom stacks, and adds visibility scoring, crawl audits, and indexing workflows so discovery signals stay fresh without manual busywork.
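
The publish-to-refresh chain described above can be illustrated with a minimal Python sketch. It is not geodeck's implementation. It assumes the webhook is signed with HMAC-SHA256 over the raw request body and sent as a hex digest, which is a common scheme but may not be the one geodeck uses. The names `Page`, `handle_publish_webhook`, and the "Example Site" values are hypothetical. Only llms.txt and sitemap.xml are rebuilt here. Markdown mirrors, RSS feeds, and search-notification pings are left out.

```python
import hashlib
import hmac
from dataclasses import dataclass
from xml.sax.saxutils import escape


@dataclass
class Page:
    # Hypothetical page record; a real CMS payload will look different.
    title: str
    url: str
    summary: str
    lastmod: str  # ISO 8601 date, e.g. "2025-01-31"


def verify_signature(secret: bytes, body: bytes, signature_hex: str) -> bool:
    """Check an HMAC-SHA256 hex signature over the raw request body.

    Assumption: this signing scheme is illustrative, not geodeck's documented one.
    """
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time, so it does not leak a timing signal.
    return hmac.compare_digest(expected, signature_hex)


def build_llms_txt(site_name: str, site_summary: str, pages: list[Page]) -> str:
    """Render llms.txt: H1 title, blockquote summary, then a linked page list."""
    lines = [f"# {site_name}", "", f"> {site_summary}", "", "## Pages", ""]
    for p in pages:
        lines.append(f"- [{p.title}]({p.url}): {p.summary}")
    return "\n".join(lines) + "\n"


def build_sitemap(pages: list[Page]) -> str:
    """Render a minimal sitemap.xml using the sitemaps.org 0.9 namespace."""
    entries = "".join(
        f"  <url><loc>{escape(p.url)}</loc><lastmod>{p.lastmod}</lastmod></url>\n"
        for p in pages
    )
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n'
        f"{entries}</urlset>\n"
    )


def handle_publish_webhook(
    secret: bytes, body: bytes, signature_hex: str, pages: list[Page]
) -> dict[str, str]:
    """Hypothetical handler: reject bad signatures, then rebuild discovery files."""
    if not verify_signature(secret, body, signature_hex):
        raise PermissionError("invalid webhook signature")
    return {
        "llms.txt": build_llms_txt("Example Site", "Docs and articles.", pages),
        "sitemap.xml": build_sitemap(pages),
    }
```

Verifying the signature before regenerating anything means an unsigned or tampered request cannot trigger a rebuild. Computing the HMAC over the raw bytes, rather than re-serialized JSON, avoids false mismatches caused by whitespace or key-order differences.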

Comments

I built geodeck because too much AI and search visibility work still happens as manual cleanup after every content update. A new page goes live, and then someone has to remember to refresh llms.txt, mirrors, feeds, sitemaps, and indexing signals across multiple tools. I wanted that whole chain to start from one signed publish webhook and stay automatic. The goal is simple: make websites continuously ready for AI crawlers and search engines without adding more operational busywork. I’d especially love feedback on the onboarding flow, the webhook setup, and which integrations or reporting details would make this genuinely useful in real production stacks.

Copyright ©2025. All Rights Reserved