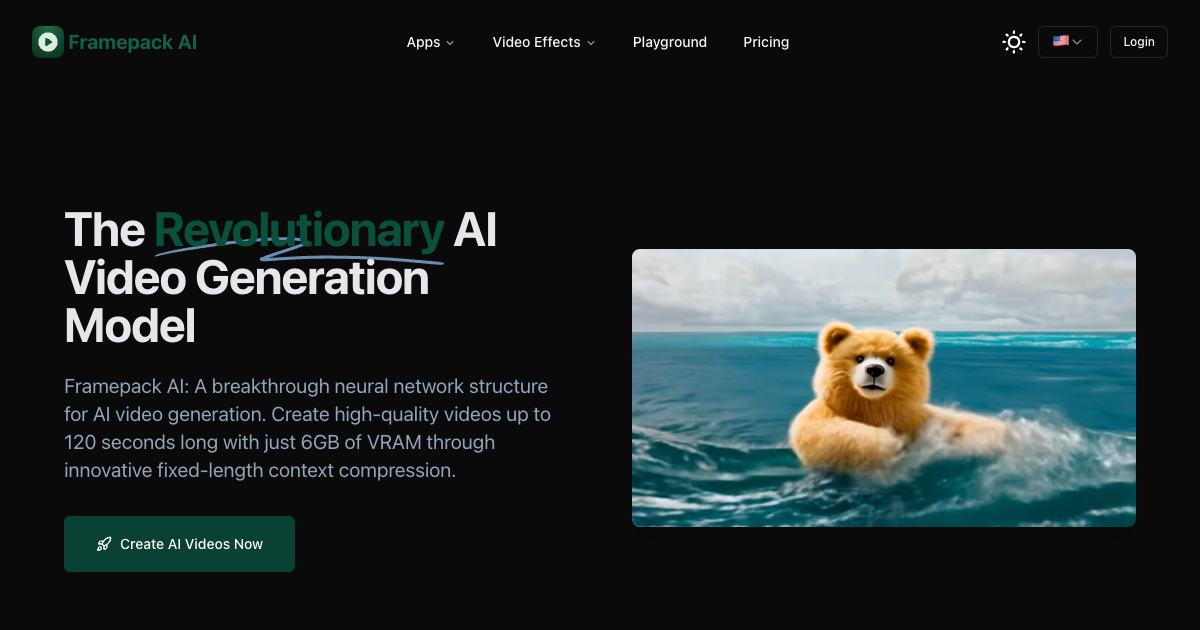

Framepack AI

Open-source, next-gen video generation using frame prediction

Comments

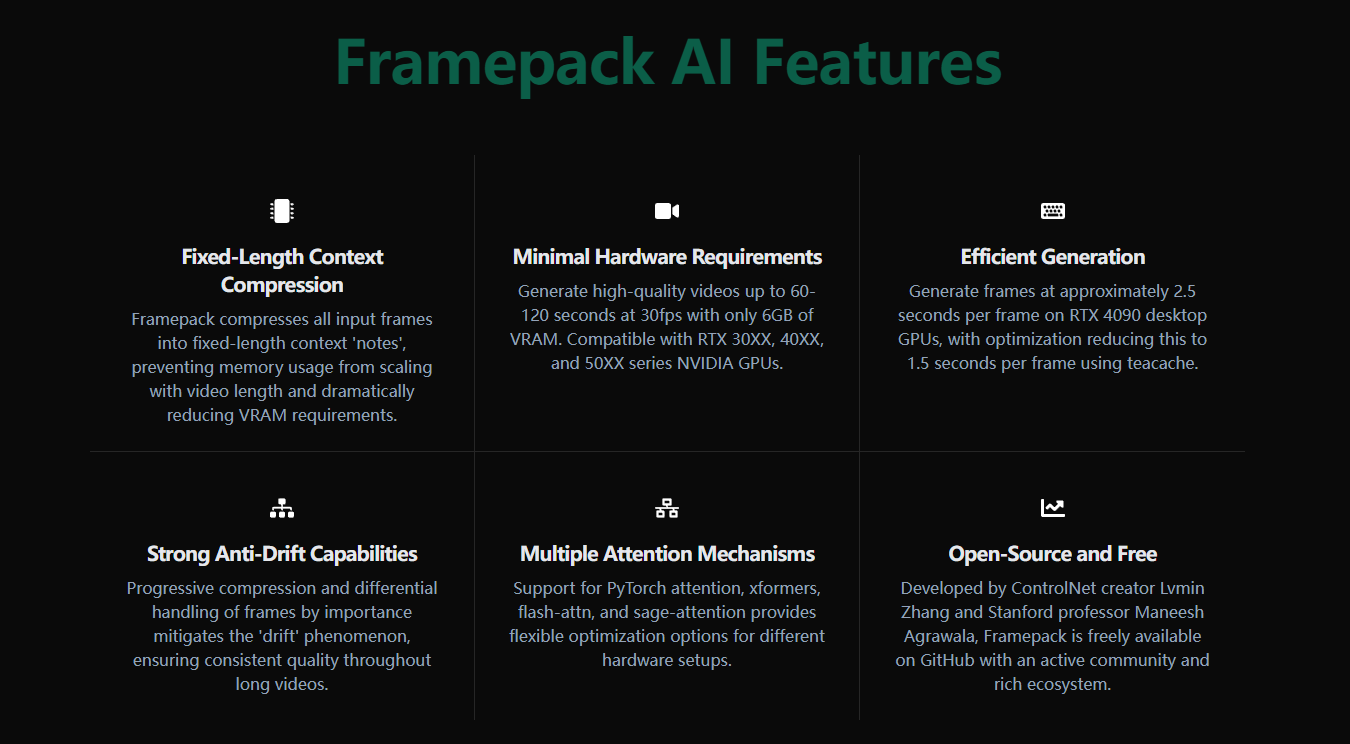

This is incredibly promising — using frame prediction for next-gen video generation opens up a lot of creative and technical possibilities, especially with an open-source approach. A few things I’m curious about: How does Framepack AI handle temporal consistency and motion coherence in longer clips? Is there any support for conditioning (e.g. text prompts, reference frames, or audio)? Also love that it’s open-source — huge for transparency and experimentation. Looking forward to seeing how the community builds on this and where performance stands compared to tools like Sora or Runway. Great work — this could be a game-changer for indie creators and researchers alike.

New to Fazier?

Find your next favorite product or submit your own. Made by @FalakDigital.

Copyright ©2025. All Rights Reserved