Answerr AI

Answerr is an AI platform for education, built for universities.

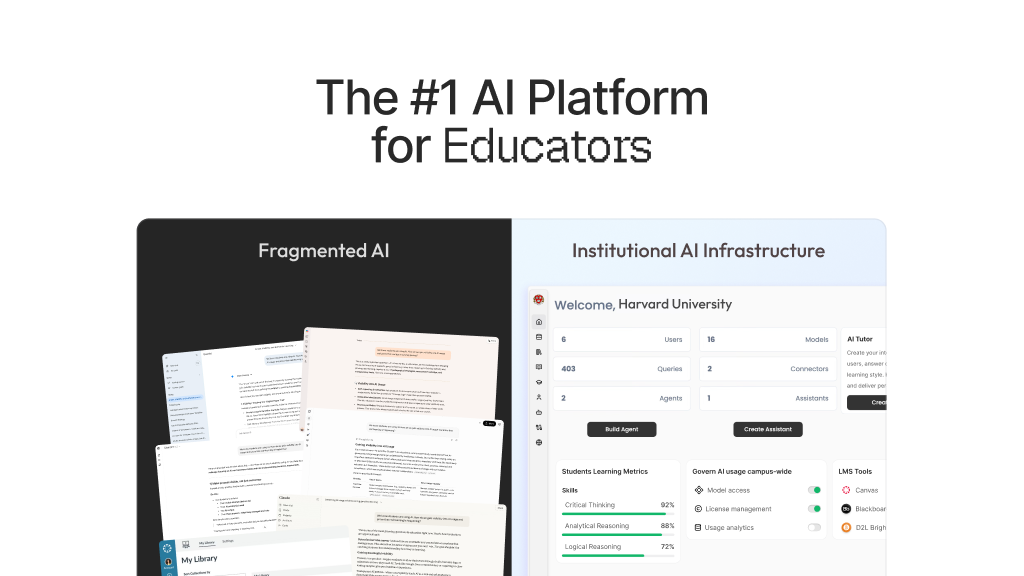

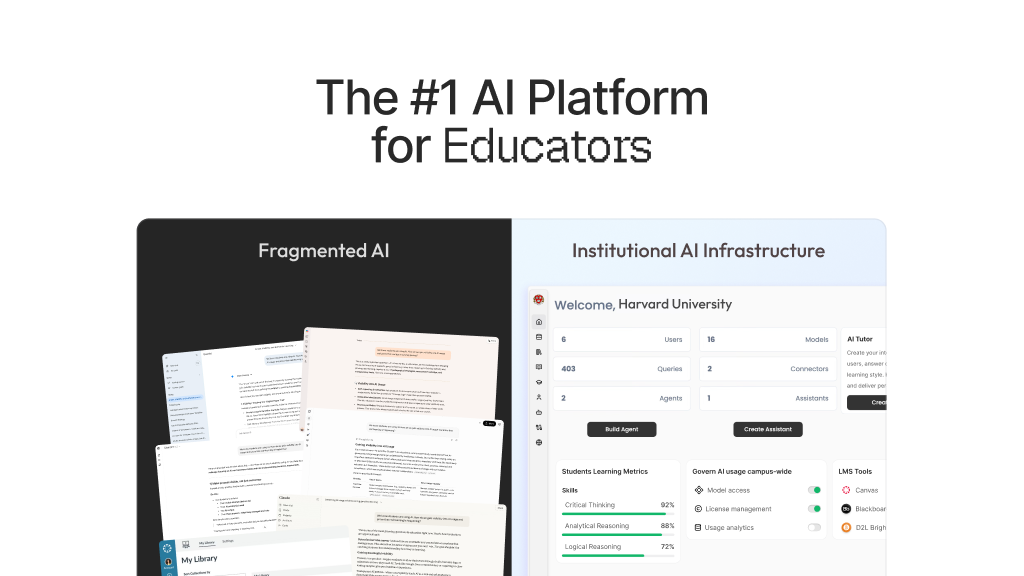

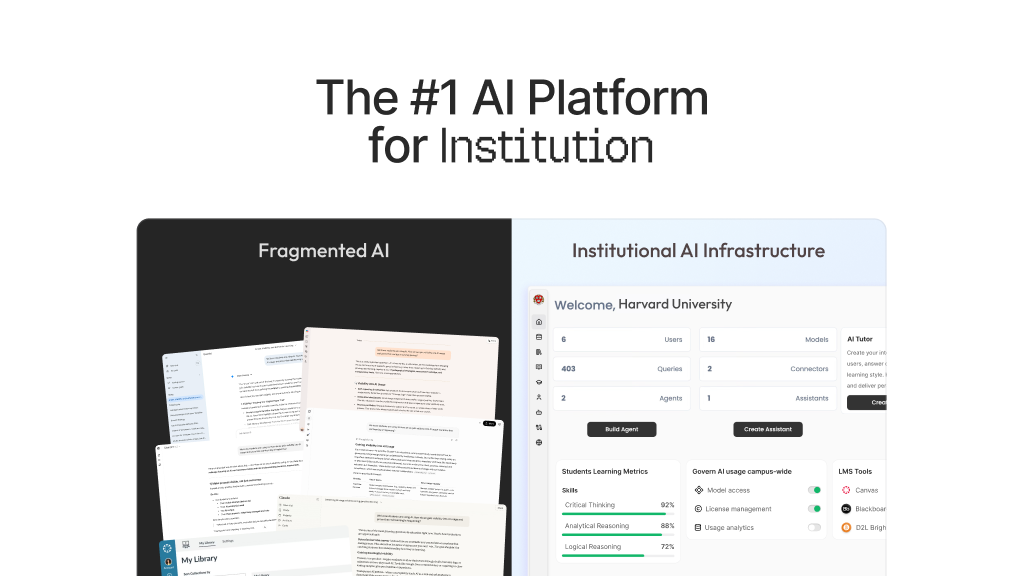

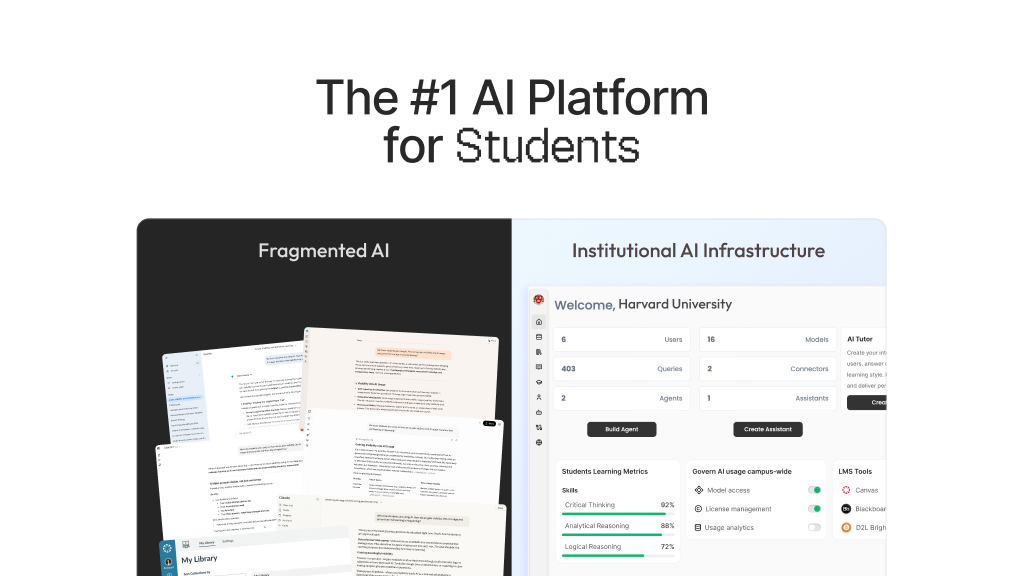

Answerr AI provides a comprehensive AI platform designed specifically for educational institutions. It includes an AI tutor that helps students ask questions and receive personalized explanations in real time. The platform allows institutions to upload documents, PDFs, and course materials to train AI assistants on institutional knowledge, enabling accurate responses grounded in trusted sources. Answerr AI also offers deep research capabilities that generate summaries, insights, and citations to support academic work. Educators can automatically create quizzes, assignments, and grading rubrics, while AI-powered feedback tools help generate student reports and learning insights. The platform includes analytics dashboards that track engagement and learning progress, helping institutions better understand student needs. Answerr AI supports integrations with major learning management systems and campus tools, allowing universities to deploy AI assistants across websites, LMS platforms, and internal systems. Built with enterprise governance and education compliance in mind, Answerr AI helps institutions securely adopt AI while improving knowledge access, student support, and overall learning experiences.

Features

Answerr AI can be used across multiple educational scenarios to improve access to knowledge and streamline institutional workflows. Universities can deploy AI assistants on their websites to answer admissions questions, provide information about programs, and guide prospective students through application processes. Within learning management systems, students can use the AI tutor to ask questions about course materials, assignments, and lecture content. Educators can use the platform to generate quizzes, grading rubrics, and feedback reports based on course materials. Support teams can automate responses to common student inquiries related to policies, deadlines, and campus services. Institutions can also create internal AI assistants to help faculty and staff quickly access documentation, policies, and administrative resources. By centralizing institutional knowledge and making it accessible through AI-powered conversations, Answerr AI helps universities improve student support, reduce repetitive workloads, and enhance the overall digital learning experience.

Use Cases

- AI tutor to help students ask questions and understand course materials instantly

- AI assistant on university websites to answer admissions and program questions

- Knowledge assistant trained on institutional documents, policies, and FAQs

- Support tool for answering common student inquiries about deadlines, courses, and campus services

- Quiz and assessment generation based on uploaded course materials

- Automatic rubric creation for grading assignments and evaluations

- AI-powered feedback reports to help educators assess student performance

- Internal AI assistant for faculty and staff to quickly access institutional knowledge

- Research support for students with AI-generated summaries and insights

- AI assistants integrated into LMS platforms to support learning workflows

Comments

The institutional angle is what makes this stand out from generic AI chatbots. Training on institutional documents and course materials means responses stay grounded and accurate — which is critical in education where hallucinations can be genuinely harmful. Would love to know how you handle cases where uploaded documents conflict with each other, especially in large university settings with many departments.

What sets this apart from typical AI chatbots is its institutional focus. By training on official documents and course materials, it keeps responses accurate and reliable—something especially important in education, where errors can be harmful. I’m curious how you manage situations where uploaded documents contradict each other, particularly in large universities with multiple departments.

What’s particularly strong here is the focus on grounding AI responses in institutional documents rather than relying on general-purpose models. In an academic setting, that shift from “broad knowledge” to “source-constrained accuracy” is critical, especially when students depend on the system for coursework and assessments. The integration across LMS platforms, websites, and internal systems also suggests a thoughtful approach to distribution. Instead of treating AI as a standalone tool, it’s being embedded directly into existing workflows, which is often the difference between adoption and abandonment in universities. One area worth exploring further is how the system handles conflicting or outdated institutional content. In large universities, policies and course materials can vary significantly across departments or change mid-term. Having a clear hierarchy of sources, version control, or even surfacing citations to users could help maintain trust and transparency. Additionally, the analytics layer has real potential, but its impact will depend on how actionable those insights are. For example, can educators identify specific knowledge gaps at the cohort level, or is it more high-level engagement tracking? More granular, intervention-ready insights could make this especially valuable for improving learning outcomes.

Answerr AI tackles a real pain point in higher education — generic AI chatbots that can't be trusted for institution-specific content. Grounding responses in uploaded course materials and rubrics is the right approach. The quizzes, grading support and analytics dashboards make this genuinely useful for large deployments. Would be interested to know how hallucinations are handled when source documents are ambiguous or incomplete.

Really interesting approach to institutional AI adoption. The ability to train assistants on specific course materials and institutional docs is a strong differentiator compared to generic chatbots. I'd be curious to know how you handle accuracy validation — do educators have a way to review and correct AI responses before they go live to students? That feedback loop seems critical for maintaining trust in an academic setting.



New to Fazier?

Find your next favorite product or submit your own. Made by @FalakDigital.

Copyright ©2025. All Rights Reserved